In my previous post I showed how to create an HTTP trigger PowerShell Azure Function. Timer triggers have a number of different uses for Ops people. They can be used to start Azure Automation Runbooks on a schedule, run a query against a database at a set interval, or check the response time of a website. In this post I’ll show you how you can use timer trigger Azure Functions to post logs to Azure Log Analytics. For this example I’ll be re-using my script to post weather data to Log Analytics.

Disclaimer: Azure Functions are relatively new and PowerShell support is considered experimental, though the team has said they are working to make it a fully supported language. Consider this my small effort to increase PowerShell support. And finally, like anything else in the cloud, this information could be outdated by tomorrow.

Create the Timer Trigger Function

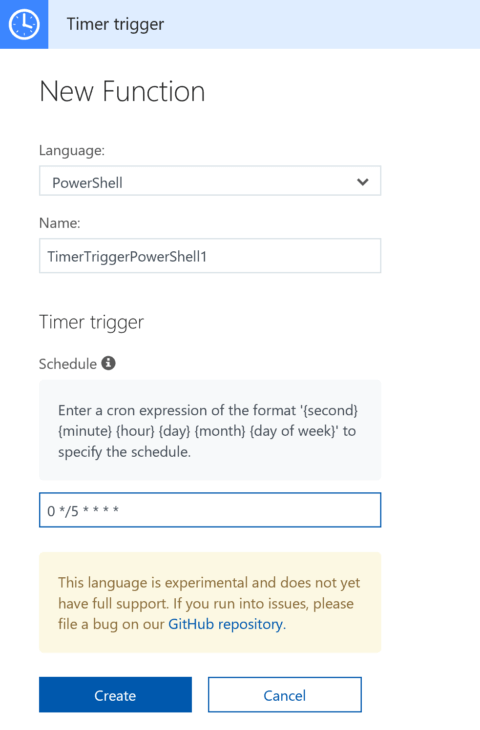

In the function app, select New Function and then select PowerShell Timer Trigger. The schedule option is kind of interesting and offers much better options than Azure Automation. The default is 5 minutes, meaning the app will run every five minutes. Don’t worry if you don’t know what you want your schedule to be, because you can always change it later. There is also a much better explanation of how it works once the function is created.

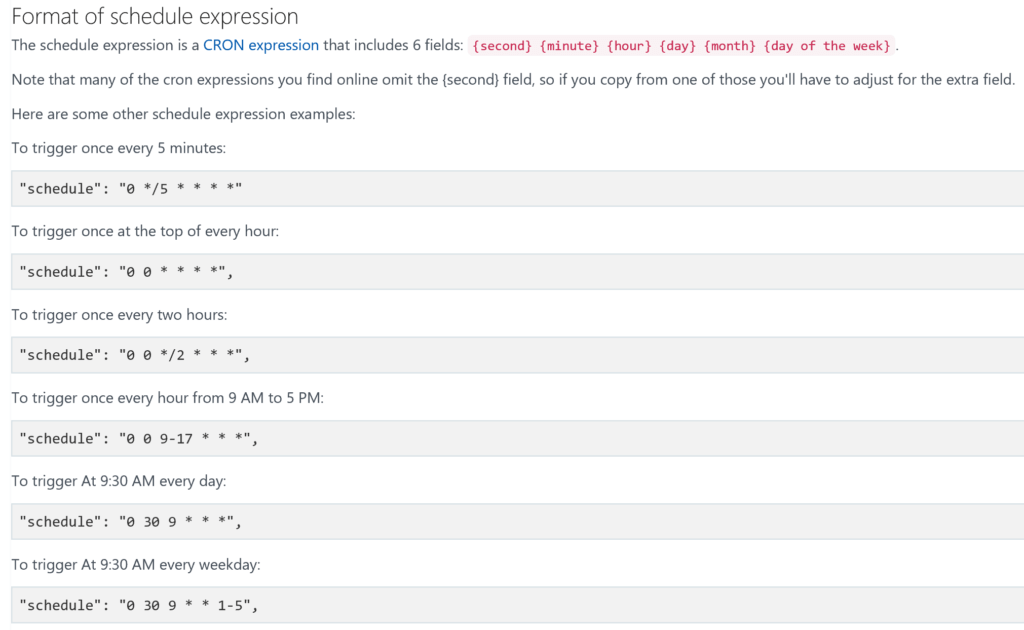

Once the function is created, go to the Integrate section under the function and expand the documentation to get this nice explanation.

For my app I’m running it every ten minutes, which looks like this: 0 */10 * * * *.
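
To make the schedule string easier to read, here is a minimal sketch that labels each of its six fields. Azure Functions timer schedules use six space-separated fields in the order second, minute, hour, day, month, day-of-week. The helper name `Get-CronFieldMap` is hypothetical and only for illustration; it is not part of Azure Functions, and it labels the fields without validating them.

```powershell
# Hypothetical helper (not an Azure Functions cmdlet): label the six
# fields of a timer schedule so the expression is easier to read.
function Get-CronFieldMap {
    param([Parameter(Mandatory)][string]$Schedule)

    $names = 'Second', 'Minute', 'Hour', 'Day', 'Month', 'DayOfWeek'
    $parts = $Schedule.Trim() -split '\s+'

    if ($parts.Count -ne $names.Count) {
        throw "Expected $($names.Count) fields but found $($parts.Count)."
    }

    # Pair each field name with its value, keeping the original order
    $map = [ordered]@{}
    for ($i = 0; $i -lt $names.Count; $i++) {
        $map[$names[$i]] = $parts[$i]
    }
    [pscustomobject]$map
}

# The schedule used in this post: second 0 of every tenth minute
$fields = Get-CronFieldMap -Schedule '0 */10 * * * *'
$fields
```

Read this way, the `0` in the first position pins the run to the top of the minute, and `*/10` in the second position is what makes it fire every ten minutes.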

Post to Log Analytics

I’ll be using the PowerShell custom log method I previously posted about, along with the weather script I linked to above. I could import the OMS PowerShell module, but I don’t think the module would get us anything special over the function code, and it probably takes the same amount of time to run.

    Write-Output "PowerShell Timer trigger function executed at:$(get-date)";

    # Create the function to create the authorization signature
    Function Build-Signature ($customerId, $sharedKey, $date, $contentLength, $method, $contentType, $resource)
    {
        $xHeaders = "x-ms-date:" + $date
        $stringToHash = $method + "`n" + $contentLength + "`n" + $contentType + "`n" + $xHeaders + "`n" + $resource
        $bytesToHash = [Text.Encoding]::UTF8.GetBytes($stringToHash)
        $keyBytes = [Convert]::FromBase64String($sharedKey)
        $sha256 = New-Object System.Security.Cryptography.HMACSHA256
        $sha256.Key = $keyBytes
        $calculatedHash = $sha256.ComputeHash($bytesToHash)
        $encodedHash = [Convert]::ToBase64String($calculatedHash)
        $authorization = 'SharedKey {0}:{1}' -f $customerId,$encodedHash
        return $authorization
    }

    # Create the function to create and post the request
    Function Post-LogAnalyticsData($customerId, $sharedKey, $body, $logType)
    {
        $method = "POST"
        $contentType = "application/json"
        $resource = "/api/logs"
        $rfc1123date = [DateTime]::UtcNow.ToString("r")
        # $body is passed in as a UTF-8 byte array, so Length is the byte count
        $contentLength = $body.Length
        $signature = Build-Signature `
            -customerId $customerId `
            -sharedKey $sharedKey `
            -date $rfc1123date `
            -contentLength $contentLength `
            -method $method `
            -contentType $contentType `
            -resource $resource

        $uri = "https://" + $customerId + ".ods.opinsights.azure.com" + $resource + "?api-version=2016-04-01"

        # $TimeStampField is not a parameter; it is read from the script scope
        $headers = @{
            "Authorization" = $signature;
            "Log-Type" = $logType;
            "x-ms-date" = $rfc1123date;
            "time-generated-field" = $TimeStampField;
        }

        $response = Invoke-WebRequest -Uri $uri -Method $method -ContentType $contentType -Headers $headers -Body $body -UseBasicParsing
        return $response.StatusCode
    }

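    # Workspace ID, read from the LogAnalyticsID application setting
    $CustomerId = $env:LogAnalyticsID

    # Primary key, read from the LogAnalyticsKey application setting
    $SharedKey = $env:LogAnalyticsKey

    # Specify the name of the record type that you'll be creating
    $LogType = "Current_Conditions"

    # Current time in sortable format (115 is the character code for 's').
    # Note: the time-generated-field header is documented as the name of a
    # payload field that holds the timestamp, not a timestamp value itself.
    $TimeStampField = (Get-Date).GetDateTimeFormats(115)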

    # Get weather data from Weather Underground for my personal weather station
    # (the URL is stored in the WeatherURL application setting)
    $URL = $env:WeatherURL
    $JSONResult = Invoke-RestMethod -Uri $URL

    # Select the fields to upload
    $weather = $JSONResult.current_observation | Select-Object station_id, temp_f, relative_humidity, dewpoint_f, feelslike_f, feelslike_c, wind_dir, wind_mph, wind_string, uv, weather, precip_1hr_in, precip_today_in, heat_index_f, pressure_in, windchill_f, visibility_mi

    # Add the location details as extra properties on the same record
    $location = $JSONResult.current_observation.display_location
    $weather | Add-Member -Name Full -Value $location.full -MemberType NoteProperty
    $weather | Add-Member -Name City -Value $location.city -MemberType NoteProperty
    $weather | Add-Member -Name State -Value $location.state -MemberType NoteProperty
    $weather | Add-Member -Name State_Name -Value $location.state_name -MemberType NoteProperty
    $weather | Add-Member -Name Country -Value $location.country -MemberType NoteProperty
    $weather | Add-Member -Name Zip -Value $location.zip -MemberType NoteProperty
    $weather | Add-Member -Name Magic -Value $location.magic -MemberType NoteProperty
    $weather | Add-Member -Name Latitude -Value $location.latitude -MemberType NoteProperty
    $weather | Add-Member -Name Longitude -Value $location.longitude -MemberType NoteProperty
    $weather | Add-Member -Name Elevation -Value $location.elevation -MemberType NoteProperty

    $weather = ConvertTo-Json $weather

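    # Submit the data to the API endpoint
    Post-LogAnalyticsData -customerId $customerId -sharedKey $sharedKey -body ([System.Text.Encoding]::UTF8.GetBytes($weather)) -logType $logType

    # Forward the same JSON to a second endpoint (URL stored in the CosmosTrigger
    # application setting). Invoke-WebRequest is used here because
    # Invoke-RestMethod returns only the parsed body, which has no StatusCode.
    $response = Invoke-WebRequest -Uri $env:CosmosTrigger -Method Post -Body $weather -UseBasicParsing
    return $response.StatusCode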
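
If you want to see what the signing step produces without calling Log Analytics, the sketch below computes an authorization header offline. It follows the same string-to-sign layout as Build-Signature in the script above, but the workspace ID, key, and JSON body are dummy values I made up for illustration, so the resulting header would not be accepted by a real workspace.

```powershell
# Sketch: compute a SharedKey authorization header offline.
# The workspace ID and key are dummy values, not real credentials.
$workspaceId = '00000000-0000-0000-0000-000000000000'
$dummyKey    = [Convert]::ToBase64String([Text.Encoding]::UTF8.GetBytes('not-a-real-key'))

# Sample payload and the RFC 1123 date that goes into the x-ms-date header
$body = [Text.Encoding]::UTF8.GetBytes('{"temp_f":72.5}')
$date = [DateTime]::UtcNow.ToString('r')

# Method, content length, content type, x-ms-date header, resource,
# separated by newlines
$stringToSign = "POST`n$($body.Length)`napplication/json`nx-ms-date:$date`n/api/logs"

# HMAC-SHA256 over the string, keyed with the Base64-decoded shared key
$hmac = New-Object System.Security.Cryptography.HMACSHA256
$hmac.Key = [Convert]::FromBase64String($dummyKey)
try {
    $hash = $hmac.ComputeHash([Text.Encoding]::UTF8.GetBytes($stringToSign))
}
finally {
    $hmac.Dispose()
}

$authorization = 'SharedKey {0}:{1}' -f $workspaceId, [Convert]::ToBase64String($hash)
$authorization
```

The date is part of the signed string, so the header value changes on every run. That is also why the script sends the exact same date in the x-ms-date header.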

Some things to note: as I described in my previous Azure Function post, I used the application settings to store my Log Analytics workspace ID and primary key, and I call them by name with $env: in front of them. I did the same for the weather URL. I also changed the JSON I was submitting in my other weather post, because I decided I wanted more fields in Log Analytics.
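
Since the script depends on those application settings, one option is to fail fast when one is missing instead of sending a request with an empty key. This is only a sketch: `Get-RequiredSetting` is a hypothetical helper name, and the placeholder value is set here purely so the example runs outside of a function app.

```powershell
# Hypothetical guard (not part of the original script): stop early when an
# application setting is missing or empty.
function Get-RequiredSetting {
    param([Parameter(Mandatory)][string]$Name)

    # Application settings surface as environment variables in the function
    $value = [Environment]::GetEnvironmentVariable($Name)
    if ([string]::IsNullOrWhiteSpace($value)) {
        throw "Application setting '$Name' is missing or empty."
    }
    $value
}

# Demo only: a placeholder so the sketch runs locally. In the function app
# the value comes from the application settings instead.
$env:LogAnalyticsID = '00000000-0000-0000-0000-000000000000'

$CustomerId = Get-RequiredSetting -Name 'LogAnalyticsID'
$CustomerId
```

A missing setting then shows up in the function log as a clear error message rather than as a failed authorization further down.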

I have been using this method for a little over a month now, and it’s a great method. Previously I was running this script as a scheduled task on my laptop, which was inconsistent whenever my laptop rebooted or went to sleep. What’s really interesting is that if you have the Application Insights connection enabled, the data ingestion into App Insights costs more than running the actual Azure Function. Granted, we’re talking about mere pennies here, but it’s still considerably more.

I have also added this code to a GitHub repo, if you’re into that: https://github.com/scautomation/Azure-Function-Post-Log-Analytics
